Rules to Better Websites - Deployment - 5 Rules

Optimize your website's launch and updates by following essential deployment rules. This collection focuses on best practices for hosting, deployment tools, and manual processes, ensuring your web applications are efficiently managed and deployed without hitches.

When building a frontend application for your API, there's a point where the team needs to choose where and how to host it. Choosing the right hosting option is critical to the scalability, available features, and flexibility of the application.

There are two primary ways to serve your frontend (e.g. Angular) application depending on where the frontend is hosted:


Option 1: Integrated with backend

The frontend is served by the backend API. Both frontend and backend are hosted on the same server. This is usually the easiest approach, and it is what the ASP.NET Core (.NET 8) Angular starter template uses.

Pros & Cons:

- 🟢 Simple deployment story; backend and frontend will always be in the same version

- 🟢 Ability to put business logic before serving the frontend

- 🟢 Ability to serve a server-side rendered frontend

- 🟢 No cross-origin requests

- ❌ Difficult to scale

- ❌ Complicated development workflow for multi-team projects

- ❌ Backend deployment is tied to frontend deployment


Option 2: Externally hosted

The frontend is served standalone on its own separate host. The backend is hosted on a different server.

Pros & Cons:

- 🟢 Highly scalable

- 🟢 Access to CDN

- 🟢 Reduced backend load

- 🟢 Flexible technology options - e.g. swapping technology is easier

- ❌ More complicated deployment

- ❌ Higher cost

Based on the pros and cons of each option above, the recommended options are:

- For small to medium-sized projects with less complicated requirements: Option 1: Integrated with backend is the recommended option since it allows faster development without having to worry too much about infrastructure.

- For larger projects or projects with complicated requirements: Option 2: Externally hosted is the recommended option since it is more scalable and more flexible with infrastructure or technology changes.
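One practical consequence of Option 2 is that the browser now calls the API across origins, so the backend has to allow the frontend's origin explicitly. The following is a minimal sketch of that idea, not a production setup: the origin `https://app.example.com`, the allowed methods and headers, and the names `buildCorsHeaders` and `handleRequest` are all assumptions made for illustration.

```javascript
// Hypothetical origin of the externally hosted frontend (assumption).
const ALLOWED_ORIGINS = new Set(["https://app.example.com"]);

// Build the CORS response headers for a request coming from `requestOrigin`.
// Unknown or missing origins get no CORS headers, so the browser blocks them.
function buildCorsHeaders(requestOrigin) {
  if (!ALLOWED_ORIGINS.has(requestOrigin)) {
    return {};
  }
  return {
    "Access-Control-Allow-Origin": requestOrigin,
    "Access-Control-Allow-Methods": "GET, POST, OPTIONS",
    "Access-Control-Allow-Headers": "Content-Type, Authorization",
    Vary: "Origin",
  };
}

// Request handler in the shape Node's built-in http server expects.
function handleRequest(req, res) {
  const corsHeaders = buildCorsHeaders(req.headers.origin);

  // Answer the browser's preflight request without running any API logic.
  if (req.method === "OPTIONS") {
    res.writeHead(204, corsHeaders);
    res.end();
    return;
  }

  res.writeHead(200, { ...corsHeaders, "Content-Type": "application/json" });
  res.end(JSON.stringify({ status: "ok" }));
}
```

To try it locally, the handler can be passed to `require("node:http").createServer(handleRequest)`. With Option 1 none of this is needed, because the frontend and the API share one origin.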


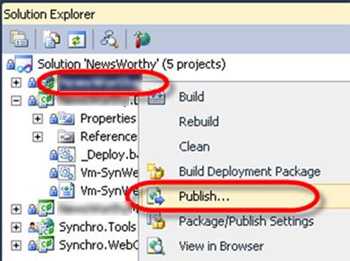

Publishing from Visual Studio is a convenient way to deploy a web application, but it relies on a single developer’s machine, which can lead to problems. Deploying to production should be easily repeatable and possible from different machines.

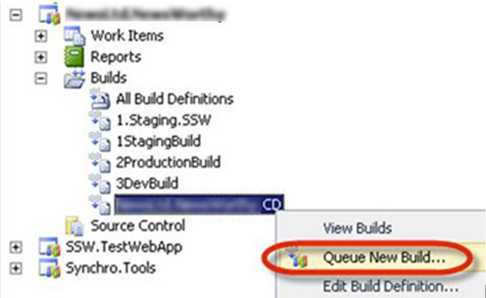

A better way to deploy is by using a defined Build in TFS.

Often, deployment is either done manually or as part of the build process. But deployment is a completely different step in your lifecycle. It's important that deployment is automated, but done separately from the build process.

There are two main reasons you should separate your deployment from your build process:

- You're not dependent on your servers for your build to succeed. Similarly, if you need to change deployment locations, or add or remove servers, you don't have to edit your build definition and risk breaking your build.

- You want to make sure you're deploying the *same* (tested) build of your software to each environment. If your deployment step is part of your build step, you may be rebuilding each time you deploy to a new environment.

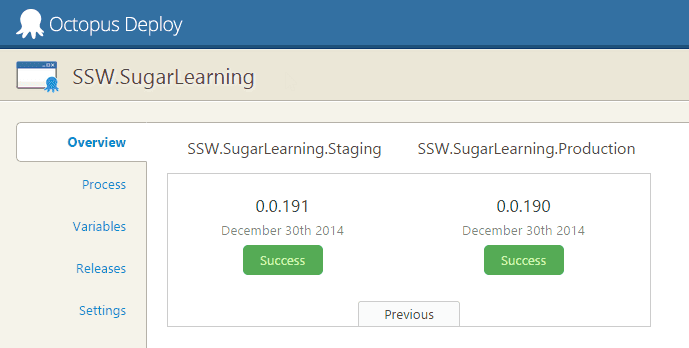

The best tool for deployments is Octopus Deploy.

Octopus Deploy allows you to package your projects as NuGet packages, publish them to the Octopus server, and deploy the packages to your configured environments. Advanced users can also perform other tasks as part of a deployment, such as running integration and smoke tests or notifying third-party services of a successful deployment.

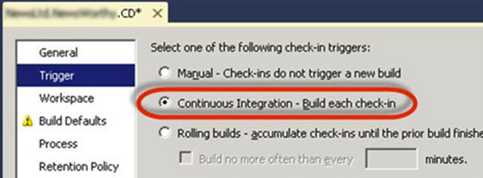

Version 2.6 of Octopus Deploy introduced the ability to create a new release and trigger a deployment when a new package is pushed to the Octopus server. Combined with OctoPack, this makes continuous integration very easy from Team Foundation Server.

What if you need to sync files manually?

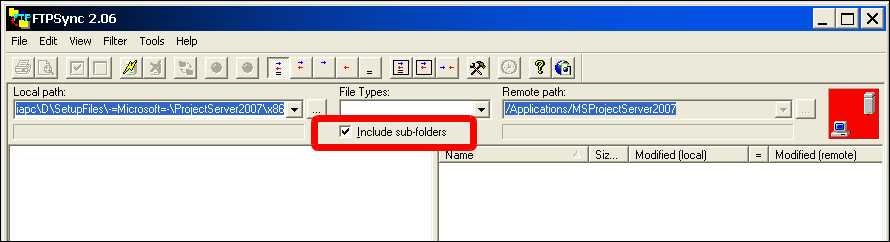

Then you should use an FTP client that lets you upload only the files you have changed. FTP Sync and Beyond Compare are recommended because they compare every file on the web server with a directory on your local machine (including modified date and file size) and report which copy is newer and which files will be overwritten by uploading or downloading. You should only make changes on the local machine, so that files always flow from the local machine to the web server.

This process allows you to keep a local copy of your live website on your machine - a great backup as a side effect.

Whenever you make changes to the website, upload them as soon as they are approved. Tick the box that says "sync sub-folders", but when you click sync, check carefully for any files marked for a reverse sync and reverse the direction on those files. For most general editing tasks, upload changes as soon as they are done. Don't leave it until the end of the day, because you won't remember which pages you changed. When you upload a file, sync EVERY file in that directory: it's highly likely that un-synced files were changed by someone who forgot to upload them. Finally, make sure that folders deleted on the local machine are also deleted on the remote server.

If you are working on some files that you do not want to sync then put a _DoNotSyncFilesInThisFolder_XX.txt file in the folder. (Replace XX with your initials.) So if you see files that are to be synced (and you don't see this file) then find out who did it and tell them to sync. The reason you have this TXT file is so that people don't keep telling the web

NOTE: Immediately before deployment of an ASP.NET application with FTP Sync, you should ensure that the application compiles - otherwise it will not work correctly on the destination server (even though it still works on the development server).

Imagine you're developing a search feature for a multilingual website. When a user searches for a term like "résumé", but the database contains variations like "resume" without accented characters, it leads to missed matches and incomplete search results. Furthermore, this lack of normalization can introduce inconsistencies in sorting and filtering, complicating data analysis and user navigation.

Implementing character normalization, which converts accented and special characters to their closest unaccented equivalents, allows terms like "résumé" to match "resume". This improves search accuracy and the overall user experience by addressing these multilingual nuances.

This is especially important for internationalization and for sites to serve users worldwide effectively, regardless of linguistic differences.

Handling diacritics in JS

JavaScript provides us with a handy method called normalize() that helps us tackle this problem effortlessly.

The method takes an optional parameter specifying the normalization form. It defaults to NFC; for removing diacritics, the form to use is Unicode Normalization Form Canonical Decomposition (NFD).

Let's see how we can use it:

```js
const accentedString = "résumé";
const normalizedString = accentedString
  .normalize("NFD")
  .replace(/\p{Diacritic}/gu, "");

console.log(normalizedString); // Output: resume
```

There are 2 things happening in the code above:

- normalize() converts the string into NFD. This form decomposes composite characters into a base character followed by combining characters. E.g. 'é' is decomposed into 'e' + the combining acute accent (U+0301)

- replace(/\p{Diacritic}/gu, "") matches every diacritic mark (accent) left behind by the decomposition and replaces it with an empty string, leaving only the base characters
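To connect this back to the search scenario, here is a minimal sketch of an accent-insensitive filter. The helper names `toSearchKey` and `searchItems` and the sample data are hypothetical; a real search would more likely normalize on the database or search-index side.

```javascript
// Strip diacritics and ignore case so "Résumé" and "resume" compare as equal.
function toSearchKey(text) {
  return text
    .normalize("NFD")
    .replace(/\p{Diacritic}/gu, "")
    .toLowerCase();
}

// Return every item whose normalized text contains the normalized query.
function searchItems(items, query) {
  const key = toSearchKey(query);
  return items.filter((item) => toSearchKey(item).includes(key));
}

// Hypothetical sample data.
const documents = ["Résumé template", "Resume tips", "Cover letter"];

console.log(searchItems(documents, "résumé"));
// Output: [ 'Résumé template', 'Resume tips' ]
```

Both the query and the stored text go through the same function, so it does not matter which side carries the accents.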

Handling diacritics in .NET

You can also achieve the same result in .NET using a similar method Normalize():

```csharp
using System;
using System.Text;
using System.Text.RegularExpressions;

string accentedString = "résumé";
string normalizedString = RemoveDiacritics(accentedString);

Console.WriteLine(normalizedString); // Output: resume

static string RemoveDiacritics(string accentedString)
{
    // \p{M} matches any combining mark, which covers diacritics.
    Regex diacritics = new Regex(@"\p{M}");

    // FormD is the .NET equivalent of NFD.
    string decomposedString = accentedString.Normalize(NormalizationForm.FormD);

    return diacritics.Replace(decomposedString, string.Empty);
}
```

Voilà! With just a few lines of code, we've transformed the accented "résumé" into its standard English form "resume". Now, the search functionality can accurately match user queries regardless of accents.

Note: While this method often ensures uniformity and handles characters with multiple representations, it may not function as expected for non-diacritic characters such as 'æ' or 'ø'. In such cases, it may be necessary to use a library or manually handle these characters.
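As a rough illustration of handling these characters manually, the sketch below adds a small lookup table on top of the NFD approach. The table contents and the name `foldSpecialCharacters` are assumptions; the right replacement for a given character depends on the language and on your search requirements.

```javascript
// Hypothetical mapping for characters that NFD does not decompose.
// Extend it with whatever characters your content actually contains.
const SPECIAL_CHARACTERS = new Map([
  ["æ", "ae"],
  ["Æ", "AE"],
  ["ø", "o"],
  ["Ø", "O"],
  ["ß", "ss"],
]);

function foldSpecialCharacters(text) {
  // First remove ordinary diacritics, as in the earlier example.
  const stripped = text.normalize("NFD").replace(/\p{Diacritic}/gu, "");

  // Then replace the leftover special characters one by one.
  return Array.from(stripped, (ch) => SPECIAL_CHARACTERS.get(ch) ?? ch).join("");
}

console.log(foldSpecialCharacters("Smørrebrød")); // Output: Smorrebrod
console.log(foldSpecialCharacters("Ærø")); // Output: AEro
```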

When managing dependencies in a JavaScript project, selecting the right versioning symbol, such as `^`, `~`, or `*`, can make a big difference to your project's stability. This guide helps you understand what these symbols mean and how to use them effectively.

Why version symbols matter

Dependency management is critical for maintaining stability, security, and compatibility in your project. Using the wrong symbol can result in unexpected updates, breaking changes, or missing out on important fixes.

Semantic versioning (SemVer) refresher

npm uses Semantic Versioning (SemVer), structured as:

```json
"dependencies": {
  "react": "MAJOR.MINOR.PATCH"
}
```

- MAJOR: Breaking changes

- MINOR: Backward-compatible new features

- PATCH: Backward-compatible bug fixes

Understanding SemVer helps you understand the common version symbols below. Learn more about semantic versioning

🎯 Symbols cheat sheet

1. Caret (`^`)

Allows minor and patch updates.

Example: `^1.0.0` matches `>=1.0.0` and `<2.0.0`.

2. Tilde (`~`)

Allows only patch updates.

Example: `~1.0.0` matches `>=1.0.0` and `<1.1.0`.

3. Wildcard (`*`)

Matches any version.

Example: `*` installs the latest version.

What happens behind the scenes?

When you run `npm install`, npm resolves the version of each dependency based on the range specified in your `package.json`. It installs the latest version that satisfies the range and then locks that exact version in the `package-lock.json` file. This ensures future installs use the same version, even if newer versions are released (unless you delete the `package-lock.json` file and re-run `npm install`).

💡 Best practices

- Use `^` (caret) only when you trust the package maintainers

- Use `~` (tilde) when you want only patch updates for bug fixes

- Avoid wildcards (`*`) for production projects

- Lock exact versions for critical applications to ensure stability
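To make the range rules concrete, here is a simplified sketch of how a caret, tilde, or wildcard range can be checked and how the latest matching version is picked. It is not npm's implementation: the functions `parse`, `compare`, `satisfies`, and `pickLatest` are written for illustration, they only handle plain `MAJOR.MINOR.PATCH` versions (no pre-release tags or compound ranges), and the published version list is made up. Real projects should rely on npm itself or the `semver` package.

```javascript
// Split "1.2.3" into numeric parts.
function parse(version) {
  const [major, minor, patch] = version.split(".").map(Number);
  return { major, minor, patch };
}

// Negative if a < b, zero if equal, positive if a > b.
function compare(a, b) {
  return a.major - b.major || a.minor - b.minor || a.patch - b.patch;
}

function satisfies(version, range) {
  if (range === "*") return true;

  const v = parse(version);
  const symbol = range[0];

  // No symbol: only the exact version matches.
  if (symbol !== "^" && symbol !== "~") {
    return compare(v, parse(range)) === 0;
  }

  const min = parse(range.slice(1));
  let max; // exclusive upper bound

  if (symbol === "~") {
    // Tilde: patch updates only.
    max = { major: min.major, minor: min.minor + 1, patch: 0 };
  } else if (min.major > 0) {
    // Caret on 1.x and above: minor and patch updates.
    max = { major: min.major + 1, minor: 0, patch: 0 };
  } else if (min.minor > 0) {
    // Caret on 0.x: npm keeps the minor version fixed.
    max = { major: 0, minor: min.minor + 1, patch: 0 };
  } else {
    // Caret on 0.0.x: npm keeps the patch version fixed.
    max = { major: 0, minor: 0, patch: min.patch + 1 };
  }

  return compare(v, min) >= 0 && compare(v, max) < 0;
}

// Pick the highest available version that satisfies the range, or null.
function pickLatest(availableVersions, range) {
  const matches = availableVersions
    .filter((version) => satisfies(version, range))
    .sort((a, b) => compare(parse(a), parse(b)));
  return matches.length > 0 ? matches[matches.length - 1] : null;
}

// Hypothetical list of published versions.
const published = ["1.0.0", "1.0.5", "1.2.0", "2.0.0"];

console.log(pickLatest(published, "^1.0.0")); // Output: 1.2.0
console.log(pickLatest(published, "~1.0.0")); // Output: 1.0.5
console.log(pickLatest(published, "*")); // Output: 2.0.0
```

The same published list gives three different results depending on the symbol, which is why the choice of symbol matters for stability.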

By understanding these symbols, you can avoid unexpected errors and keep your project on track! 🚀

For more details about npm version selection and symbols, check out the official npm documentation on semver ranges.